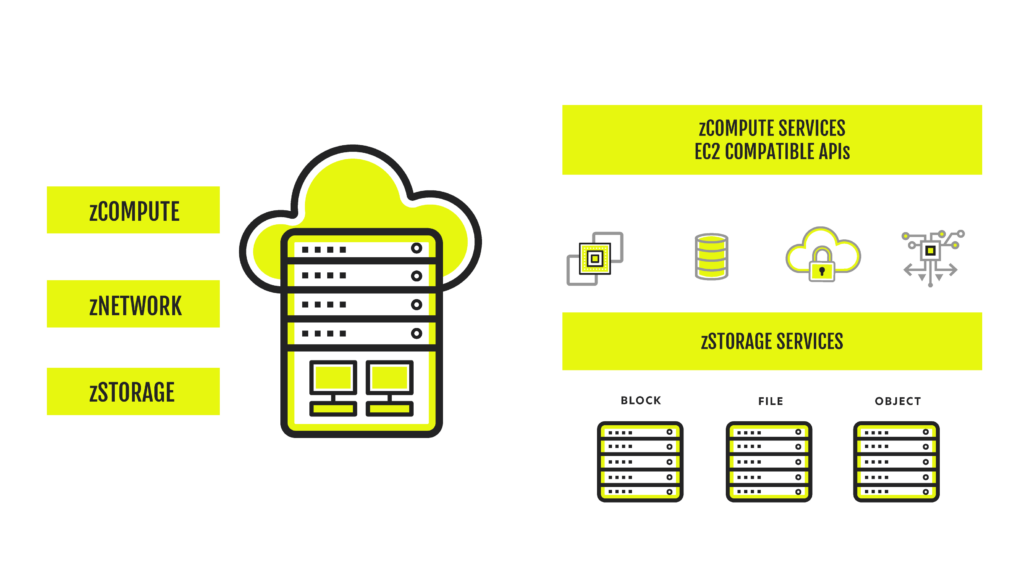

Fully-managed cloud services - compute, networking, storage and more - designed for service providers and the modern enterprise.

Access on-demand, enterprise-grade compute, networking and storage designed to handle any workload, anywhere – on-premises, hybrid, multi-cloud and at the edge. Get fully-managed, pay-for-use cloud services to lower your costs and future-proof your infrastructure.

Zero-risk, on-demand cloud services.

Improve your cloud services with access to fully-managed IT infrastructure on demand. Pay only for what you use. Scale up, scale down or turn it off at any time. No long-term contracts or CapEx hardware investments.

Hybrid-ready by design.

Simplify complex distributed infrastructure, whether on-prem, across multiple clouds or at the edge. Centralize your management capabilities and deliver the best price-performance ratio for any workload.

Global reach. Local appeal.

Deliver the performance and reliability your customers expect no matter the location. Offer low-latency edge services with Zadara’s existing fully-managed clouds or global base of 300+ MSP partners.

Trust your cloud.

Take control of your data with Zadara’s secure-by-design infrastructure, data protection solutions, and our global network of partners. Isolate your data with click-to-provision options for dedicated storage at the controller level.

Centralized and easy monitoring.

Access Zadara’s simple, dashboard-based cloud management: a web-based interface for monitoring your applications and infrastructure with visualized dashboards, automated monitoring and alerting, and detailed reporting.

24/7/365 DevOps support.

Free your IT team from ongoing maintenance. Zadara delivers around-the-clock, proactive monitoring and support, and seamless upgrades, backed by our industry-best uptime SLAs.

Zadara Edge Cloud Locations

Zadara Edge Cloud Services